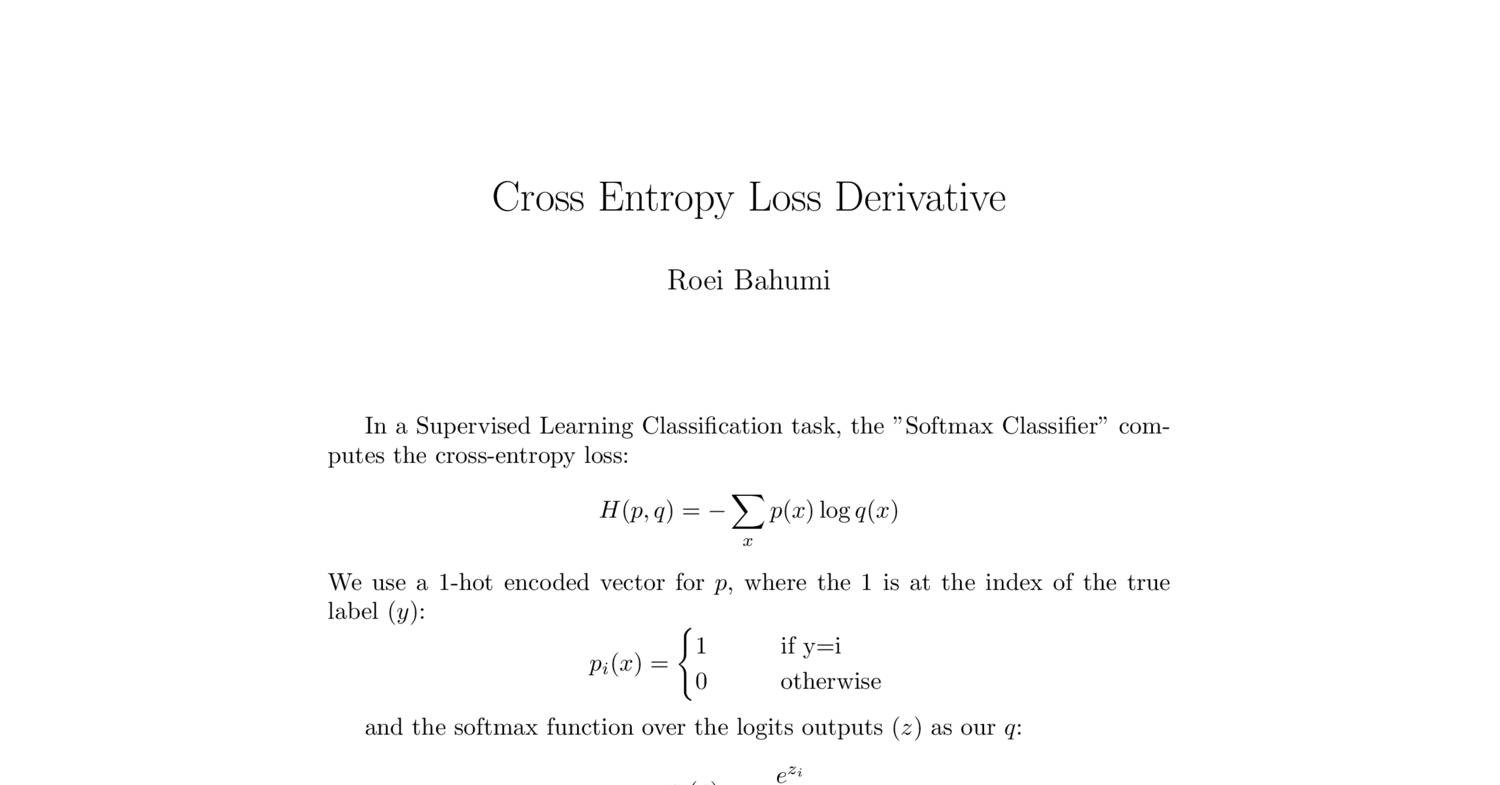

For a soft target of 0.6 and a predicted value of 0.7, this simply changes the cross entropy computation to -(0.4*log(0.3) + 0.6*log(0.7)). Finally, you can simply average/sum these per-pixel cross-entropies over the image. This is what most of us are familiar with.

Cross entropy is broadly used as a loss function when you are optimizing classification models. Cross entropy loss is often used when training models that output probabilities. This is the loss function used in (multinomial) logistic regression and extensions of it such as neural networks.
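As a rough illustration of the per-pixel computation and the final averaging/summing step, here is a minimal sketch in plain Python. The function names `pixel_cross_entropy` and `image_cross_entropy`, the `reduction` argument, and the `eps` clamp are assumptions made for this example, not part of any particular library.

```python
import math

def pixel_cross_entropy(target, pred, eps=1e-12):
    """Cross entropy between a target "on" probability and a predicted one.

    `target` may be a hard label (0 or 1) or a soft value such as 0.6.
    """
    pred = min(max(pred, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -((1.0 - target) * math.log(1.0 - pred) + target * math.log(pred))

def image_cross_entropy(targets, preds, reduction="mean"):
    """Average (or sum) the per-pixel cross entropies over a flat list of pixels."""
    losses = [pixel_cross_entropy(t, p) for t, p in zip(targets, preds)]
    total = sum(losses)
    return total / len(losses) if reduction == "mean" else total

# Soft target 0.6, prediction 0.7: -(0.4*log(0.3) + 0.6*log(0.7))
loss_soft = pixel_cross_entropy(0.6, 0.7)

# Hard targets reduce to -log(0.7) (true pixel 1) or -log(0.3) (true pixel 0)
loss_on = pixel_cross_entropy(1, 0.7)
loss_off = pixel_cross_entropy(0, 0.7)
```

With hard targets the same function falls back to the familiar single-term form, so one code path covers both cases.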

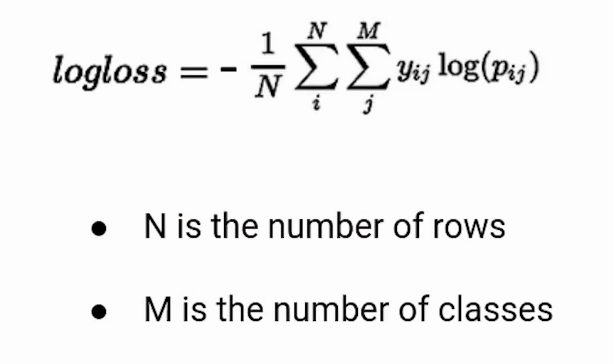

Cross entropy loss, also known as log loss or logistic loss, is a widely used loss function in machine learning, particularly for classification problems. It quantifies the difference between the predicted probability distribution and the actual or true distribution of the target classes. For a binary target, the loss looks like loss = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). We will first look at binary cross entropy loss and learn how Focal loss is derived from cross entropy.

hwijeen (Hwijeen Ahn), February 9, 2022, 1:55am: Hi, I would like to see the implementation of cross entropy loss. So far, I learned that torch.nn.functional.py calls torch._C._nn.cross_entropy_loss, but I am having trouble finding the C implementation. From the related issue ( Where does torch.
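To make the binary form concrete, the following is a minimal sketch of -(y*log(ŷ) + (1 - y)*log(1 - ŷ)) averaged over examples, written in plain Python rather than PyTorch. The name `binary_cross_entropy` and the `eps` clamp are choices made for this illustration; it is not the PyTorch implementation the forum question asks about.

```python
import math

def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean of -(y*log(p) + (1 - y)*log(1 - p)) over all examples."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)

# Same true label (1), three different predicted probabilities
confident_right = binary_cross_entropy([1], [0.99])
unsure = binary_cross_entropy([1], [0.5])
confident_wrong = binary_cross_entropy([1], [0.01])
```

Comparing the three values shows the loss growing as the prediction moves away from the true label, with the confident wrong prediction penalized most heavily.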

Let's look at how this focal loss is designed.

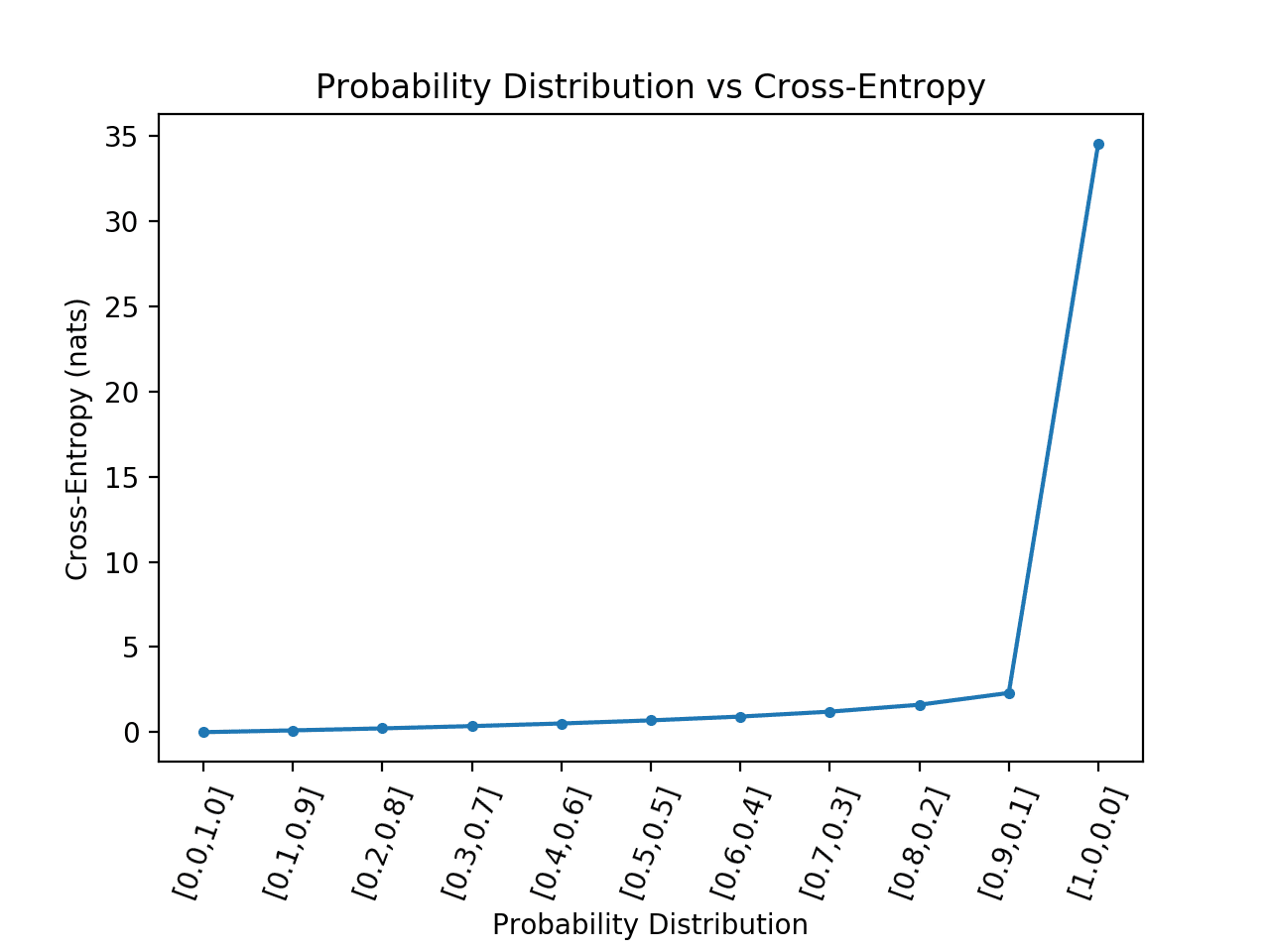
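As a hedged sketch of the idea, the code below assumes the commonly cited binary form of focal loss, -(1 - p_t)^gamma * log(p_t), where p_t is the probability the model assigns to the true class. The function name `focal_loss`, the default `gamma=2.0`, and the omission of any class-balancing weight are assumptions for this example and may differ from the formula in the figure.

```python
import math

def focal_loss(y, p, gamma=2.0, eps=1e-12):
    """Binary focal loss for one example (assumed form, no class weighting).

    y: true label (0 or 1); p: predicted probability of class 1.
    """
    p = min(max(p, eps), 1.0 - eps)   # clamp to avoid log(0)
    p_t = p if y == 1 else 1.0 - p    # probability assigned to the true class
    return -((1.0 - p_t) ** gamma) * math.log(p_t)

# gamma = 0 removes the modulating factor and leaves plain cross entropy
easy_ce = focal_loss(1, 0.9, gamma=0.0)
easy_fl = focal_loss(1, 0.9)
hard_ce = focal_loss(1, 0.1, gamma=0.0)
hard_fl = focal_loss(1, 0.1)
```

Under these assumptions the easy example (p_t = 0.9) keeps about 1% of its cross-entropy value, while the hard example (p_t = 0.1) keeps about 81%, which is the down-weighting of easy examples in action.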

Focal loss works well for highly imbalanced class scenarios, as shown in Fig. 1. It down-weights the contribution of easy examples and enables the model to focus more on learning hard examples.

For binary classification, where yi can be 0 or 1, the loss term is generally called log loss because it contains a log term. The formula of this loss function can be given by -(yi*log(ŷi) + (1 - yi)*log(1 - ŷi)), where ŷi is the predicted probability for example i. The binary cross-entropy loss function actually calculates the average cross entropy across all examples. Putting it all together, cross-entropy loss increases drastically when the network makes incorrect predictions with high confidence. The cross-entropy operation computes the cross-entropy loss between network predictions and target values for single-label and multi-label classification tasks.

Cross entropy measures distance between any two probability distributions. In what you describe (the VAE), MNIST image pixels are interpreted as probabilities for pixels being "on/off". In that case your target probability distribution is simply not a Dirac distribution (0 or 1) but can have different values. See the cross entropy definition on Wikipedia.

With the above as a reference, let's say your model outputs a reconstruction of 0.7 for a certain pixel. This essentially says that your model estimates p(pixel=1) = 0.7, and accordingly p(pixel=0) = 0.3. If the target pixels were just 0 or 1, your cross entropy for this pixel would be either -log(0.3) if the true pixel is 0 or -log(0.7) (a smaller value) if the true pixel is 1. The full formula would be -(0*log(0.3) + 1*log(0.7)) if the true pixel is 1 or -(1*log(0.3) + 0*log(0.7)) otherwise. Let's say your target pixel is actually 0.6! This essentially says that the pixel has a probability of 0.6 to be on and 0.4 to be off.

Hello everyone, I am currently doing a deep learning research project and have a question regarding the use of loss functions. Basically, for my loss function I am using weighted cross entropy + soft Dice loss, but recently I came across a mean IoU loss which works; the problem is that it purposely returns a negative loss.

Uses and Abuses of the Cross-Entropy Loss: Case Studies in Modern Deep Learning. Elliott Gordon-Rodriguez, Gabriel Loaiza-Ganem, Geoff Pleiss, John Patrick. Abstract: Cross-entropy is a widely used loss function in applications.

Binary cross-entropy loss is a Sigmoid activation plus a Cross-Entropy loss. Unlike Softmax loss, it is independent for each vector component (class), meaning that the loss computed for every CNN output vector component is not affected by other component values.
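The independence claim can be checked with a small sketch: change one class's raw score and see which per-class loss terms move. Everything here (the helper names, the example logits and targets) is made up for illustration and uses only the Python standard library.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]

def sigmoid_ce_per_class(logits, targets):
    """Sigmoid + cross entropy, computed separately for each class."""
    losses = []
    for z, t in zip(logits, targets):
        p = sigmoid(z)
        losses.append(-(t * math.log(p) + (1 - t) * math.log(1 - p)))
    return losses

def softmax_ce_per_class(logits, targets):
    """Softmax + cross entropy, one term per class."""
    probs = softmax(logits)
    return [-t * math.log(p) for t, p in zip(targets, probs)]

targets = [1, 0, 0]
logits_a = [2.0, -1.0, 0.5]
logits_b = [2.0, 3.0, 0.5]  # only the second class's score changed

sig_a = sigmoid_ce_per_class(logits_a, targets)
sig_b = sigmoid_ce_per_class(logits_b, targets)
soft_a = softmax_ce_per_class(logits_a, targets)
soft_b = softmax_ce_per_class(logits_b, targets)
```

In the sigmoid version only the second class's term differs between `sig_a` and `sig_b`; in the softmax version the first class's term changes as well, because the normalization couples all components.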
